I'm a reservoir engineer, not a sysadmin. But over the course of a couple of days I designed and built a GPU compute cluster for Ridgeline Engineering from the ground up. Here's what that process actually looked like — and why it was worth it.

The Goal

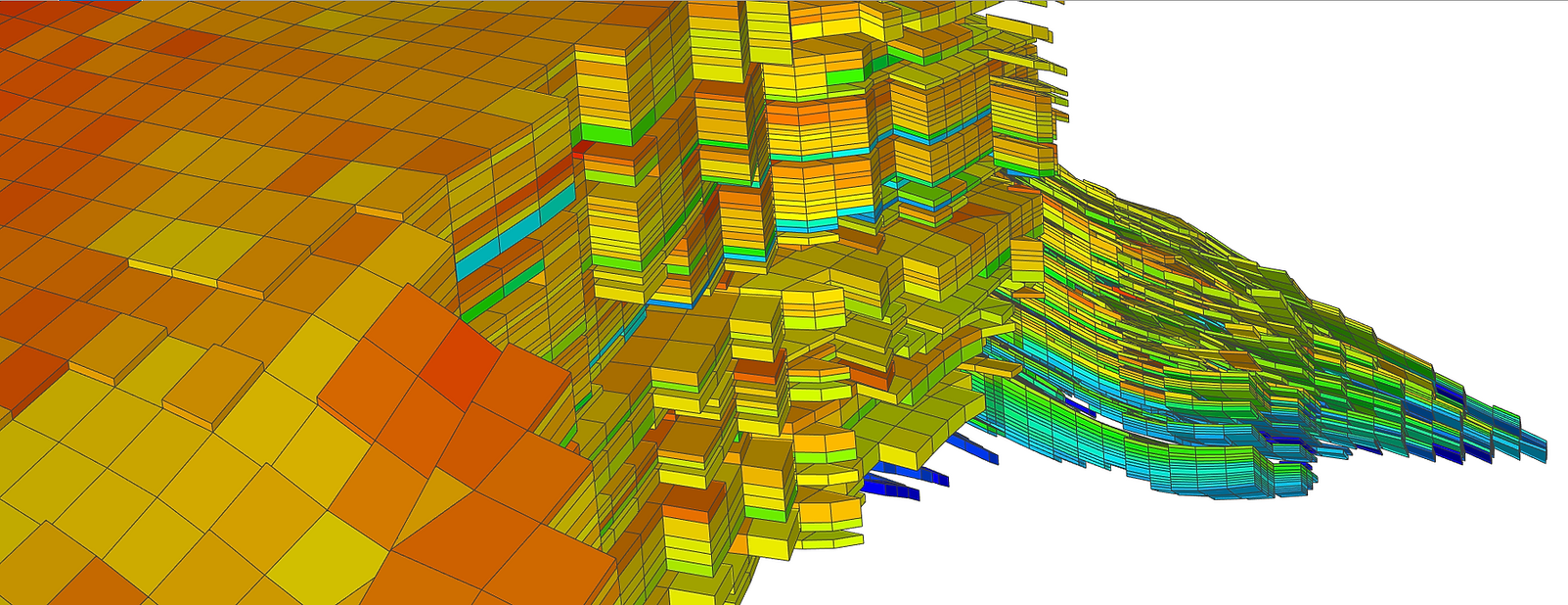

The objective was straightforward: free up my workstation and give other engineers and geoscientists at Ridgeline access to real compute power for reservoir simulation workloads. When you're running GPU-accelerated simulators, tying up a workstation for hours isn't sustainable — especially when multiple team members need access.

The Hardware

The cluster runs NVIDIA RTX 6000 Ada and RTX PRO 6000 Blackwell professional GPUs, managed by a virtualized head node that handles job dispatch and serves shared storage over NFS on a dedicated 10GbE backbone. We deliberately chose professional workstation cards over consumer alternatives: fewer, larger-memory GPUs mean fewer subdomains and less communication overhead when running MPI-distributed simulations.
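To put a rough number on the "fewer domains, less communication" argument, here is a toy Python model. It assumes a structured grid split into slabs along one axis, with one layer of boundary cells exchanged in each direction across every internal interface. The grid dimensions are made up for illustration, and real simulators partition their grids in more sophisticated ways, so treat this as a sketch of the trend rather than a prediction.

```python
def halo_cells(nx: int, ny: int, nz: int, parts: int) -> int:
    """Cells exchanged per halo update for a 1-D slab split along z.

    Assumption: one layer of cells is sent in each direction across
    every internal interface between neighbouring slabs.
    """
    if parts < 1 or parts > nz:
        raise ValueError("parts must be between 1 and nz")
    interfaces = parts - 1          # internal boundaries between slabs
    return 2 * interfaces * nx * ny  # both directions per interface


if __name__ == "__main__":
    # Hypothetical 200 x 200 x 100 grid (4 million cells).
    for parts in (1, 2, 4, 8):
        print(parts, "subdomains ->", halo_cells(200, 200, 100, parts), "cells exchanged")
```

In this model, going from 2 subdomains to 8 raises the exchanged volume from 80,000 to 560,000 cells per update, which is the overhead that larger-memory cards help avoid.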

The Software Stack

Intel MPI handles communication between the decomposed domains across GPUs and nodes. SLURM manages all job scheduling: priority queuing, GPU-aware resource allocation, and automatic dispatch to available compute nodes. The system is designed to scale: adding a new node is as simple as mounting the NFS share, copying the SLURM configuration and Munge key, and registering the node with the controller.
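For a sense of what GPU-aware allocation looks like from the user's side, here is a minimal Python sketch that builds a SLURM batch script requesting a specific GPU type. The `#SBATCH` options are standard SLURM ones, but the job name, the GPU type label `rtx6000ada`, the command, and the one-MPI-rank-per-GPU layout are all assumptions for illustration. Whether ranks are launched with `srun` or `mpirun` depends on how Intel MPI was integrated with SLURM on a given cluster.

```python
def build_sbatch_script(job_name: str, nodes: int, gpus_per_node: int,
                        gpu_type: str, command: str) -> str:
    """Return the text of a batch script asking for typed GPUs.

    Assumption: one MPI rank per GPU, so tasks per node equals GPUs per node.
    """
    if nodes < 1 or gpus_per_node < 1:
        raise ValueError("nodes and gpus_per_node must be positive")
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --ntasks-per-node={gpus_per_node}",
        # --gres is a per-node request in the form gpu:<type>:<count>
        f"#SBATCH --gres=gpu:{gpu_type}:{gpus_per_node}",
        "",
        f"srun {command}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical job: 2 nodes, 2 GPUs each, placeholder simulator command.
    print(build_sbatch_script("res_sim", 2, 2, "rtx6000ada", "./simulator case.data"))
```

The resulting text can be saved to a file and submitted with `sbatch`.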

What I Actually Learned

It was a couple of days of concentrated trial and error: configuring NFS exports, debugging MPI communication between nodes, getting SLURM's GPU resource detection working with heterogeneous cards, and setting up Munge authentication. None of it was plug-and-play. Every layer had its own learning curve, and most of those hours went to reading documentation and troubleshooting failed service starts.
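The heterogeneous-GPU piece is the easiest to show concretely. Below is a small Python sketch that generates the kind of `slurm.conf` and `gres.conf` entries involved when different nodes carry different cards. It is not the actual Ridgeline configuration: the hostnames, GPU counts, and type labels are hypothetical, and a real `NodeName` line would also carry CPU and memory details that are left out here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuNode:
    hostname: str   # hypothetical node name
    gpu_type: str   # label chosen for illustration
    gpu_count: int


def device_files(count: int) -> str:
    """Device file spec for `count` GPUs, using SLURM's bracket range syntax."""
    if count < 1:
        raise ValueError("count must be positive")
    return "/dev/nvidia0" if count == 1 else f"/dev/nvidia[0-{count - 1}]"


def slurm_conf_lines(nodes: list[GpuNode]) -> list[str]:
    """Node definitions for slurm.conf (CPU and memory fields omitted)."""
    lines = ["GresTypes=gpu"]
    for n in nodes:
        lines.append(
            f"NodeName={n.hostname} Gres=gpu:{n.gpu_type}:{n.gpu_count} State=UNKNOWN"
        )
    return lines


def gres_conf_lines(nodes: list[GpuNode]) -> list[str]:
    """Matching gres.conf entries that map each GPU type to device files."""
    return [
        f"NodeName={n.hostname} Name=gpu Type={n.gpu_type} File={device_files(n.gpu_count)}"
        for n in nodes
    ]


if __name__ == "__main__":
    cluster = [GpuNode("gpu01", "rtx6000ada", 2), GpuNode("gpu02", "blackwell", 1)]
    print("\n".join(slurm_conf_lines(cluster)))
    print("\n".join(gres_conf_lines(cluster)))
```

The detail that tends to cause trouble is that the type and count in `slurm.conf` must agree with what `gres.conf` declares for the same node. Generating both from one list is one way to keep them in sync.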

But that's exactly the point. Understanding your compute infrastructure at this level changes how you think about simulation workflows. You know what's actually happening when a job runs, where the bottlenecks are, and how to optimize for your specific workloads.

The Takeaway

If you're an engineer thinking about building compute infrastructure instead of renting cloud time, do it. The learning curve is steep but the payoff is real. Faster turnaround on complex reservoir studies — whether that's CCUS feasibility modeling or field development planning — makes a measurable difference in what you can deliver to clients. And you come out the other side understanding your tools at a much deeper level.